AI in Clinical Trials: Why FDA’s Risk-Based Credibility Framework Matters

AI isn’t quietly creeping into clinical trials; it’s arriving at full speed. From recruitment prediction to imaging endpoint derivation, sponsors are experimenting with machine learning across the drug development lifecycle. But not all AI carries the same risk, and that’s where FDA’s new draft guidance is a major step forward.

The Core Idea: Risk-Based Credibility

The FDA’s risk-based credibility assessment framework starts with a simple question:

What is this AI being used for?

And from there, it scales the burden of proof. The higher the potential impact on patient safety or regulatory decision-making, the stronger the evidence and documentation sponsors must provide.

This framework introduces a structured 7-step process:

Define the question of interest – Be explicit about the decision the AI is informing.

Define the context of use (COU) – Describe exactly how and where the model’s outputs will be applied.

Assess model risk – Consider both the influence of the model (how much it drives the decision) and the consequence of getting it wrong.

Develop a credibility assessment plan – Outline data quality, validation metrics, and governance activities tailored to model risk.

Execute the plan – Generate and document evidence.

Document results and deviations – Produce a credibility assessment report.

Decide adequacy – Determine whether the model is appropriate for its COU or requires mitigation, additional evidence, or redesign.
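The risk assessment in step 3 can be pictured as a small lookup over two ratings. The sketch below is illustrative only: the three-level scale, the multiplication rule, and the names `Level` and `assess_model_risk` are assumptions made for this example and are not taken from the draft guidance, which does not prescribe a numeric scheme.

```python
from enum import IntEnum


class Level(IntEnum):
    """Hypothetical three-point scale for both inputs and the resulting tier."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def assess_model_risk(influence: Level, consequence: Level) -> Level:
    """Combine model influence and decision consequence into one risk tier.

    Assumed rule for illustration: score = influence * consequence,
    where 1-2 maps to LOW, 3-4 to MEDIUM, and 6-9 to HIGH.
    """
    score = int(influence) * int(consequence)
    if score >= 6:
        return Level.HIGH
    if score >= 3:
        return Level.MEDIUM
    return Level.LOW


# A model that fully drives a high-consequence decision lands in the top tier.
print(assess_model_risk(Level.HIGH, Level.HIGH).name)
# A model with little influence over a high-consequence decision lands in the middle.
print(assess_model_risk(Level.LOW, Level.HIGH).name)
```

Whatever rule a sponsor adopts, writing it down as an explicit function makes the tiering reproducible and easy to cite in the credibility assessment plan.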

Context of Use: The Pivot Point

The framework’s cornerstone is context of use. This is where sponsors describe not just what the AI does but also how its outputs influence trial decisions.

Examples:

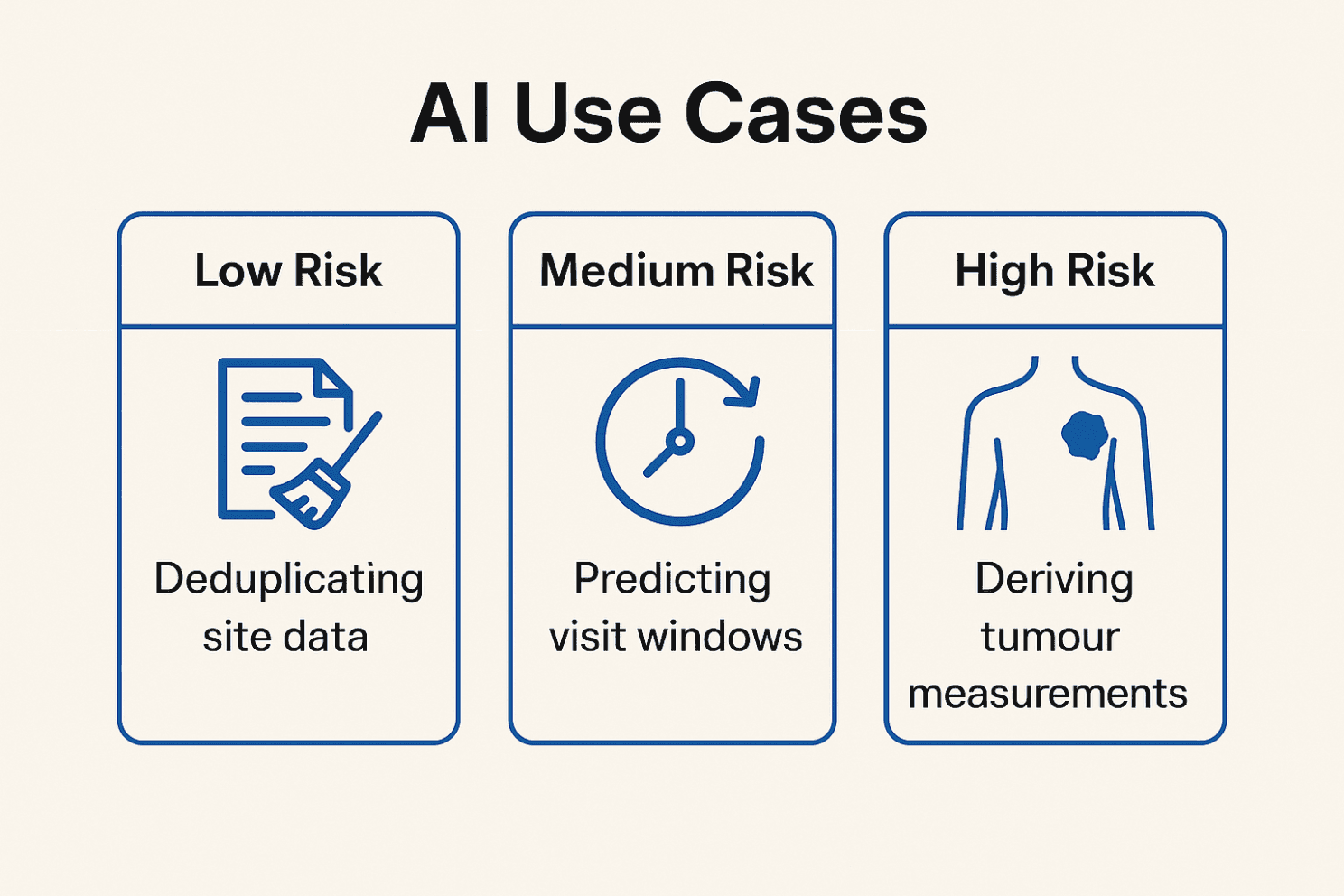

Administrative: deduplicating site data → low impact

Operational: predicting visit windows → moderate impact

Clinical / Regulatory: deriving tumour measurements for endpoints → high impact

Your COU determines the level of validation, documentation, and FDA interaction expected.
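One way to keep COU statements consistent across a portfolio is to record them in a small register. The sketch below reuses the three examples above; the `ContextOfUse` class, the `IMPACT_ORDER` mapping, and the `review_order` helper are hypothetical names chosen for illustration, not terms from the guidance.

```python
from dataclasses import dataclass

# Assumed ordering of the impact labels used in the examples above.
IMPACT_ORDER = {"low": 0, "moderate": 1, "high": 2}


@dataclass(frozen=True)
class ContextOfUse:
    category: str  # e.g. "Operational"
    use: str       # what the model output is used for
    impact: str    # one of the keys in IMPACT_ORDER

    def __post_init__(self):
        # Reject labels outside the agreed scale so the register stays sortable.
        if self.impact not in IMPACT_ORDER:
            raise ValueError(f"unknown impact level: {self.impact!r}")


REGISTER = [
    ContextOfUse("Administrative", "deduplicating site data", "low"),
    ContextOfUse("Operational", "predicting visit windows", "moderate"),
    ContextOfUse("Clinical / Regulatory",
                 "deriving tumour measurements for endpoints", "high"),
]


def review_order(register):
    """Return entries with the highest-impact contexts of use first."""
    return sorted(register, key=lambda c: IMPACT_ORDER[c.impact], reverse=True)


for cou in review_order(REGISTER):
    print(f"{cou.impact:>8}: {cou.category} ({cou.use})")
```

Sorting by impact gives governance teams a simple queue: the entries at the top are the ones most likely to need deeper validation and earlier discussion with the agency.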

Continuous Lifecycle Management

AI isn’t static. The FDA explicitly calls for ongoing monitoring, drift detection, and version control, not a one-time validation.

Sponsors are expected to:

Use representative, high-quality datasets for training and testing.

Track performance over time, retrain when needed, and re-validate when model changes could affect outputs.

Maintain audit trails, governance policies, and human override mechanisms.
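As one possible illustration of drift detection, the sketch below computes a Population Stability Index (PSI) between a reference sample and a current sample of a single numeric input. PSI is a common industry heuristic and is swapped in here as an example; the guidance does not name a specific method. The function names, the ten-bin default, and the 0.25 threshold (a widely used rule of thumb) are assumptions.

```python
import math
from typing import Sequence


def population_stability_index(reference: Sequence[float],
                               current: Sequence[float],
                               bins: int = 10,
                               eps: float = 1e-6) -> float:
    """PSI between two samples, using equal-width bins over the reference range."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def proportions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values to edge bins
            counts[idx] += 1
        # Floor each proportion at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    ref_p, cur_p = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


def needs_revalidation(psi: float, threshold: float = 0.25) -> bool:
    """Flag the model for review when drift exceeds the assumed threshold."""
    return psi > threshold


training_values = [i / 100 for i in range(100)]        # reference distribution
recent_values = [0.5 + i / 200 for i in range(100)]    # shifted toward higher values
psi = population_stability_index(training_values, recent_values)
print(needs_revalidation(psi))
```

In practice a check like this would run on a schedule for each monitored input and for the model's outputs, with every result written to the audit trail alongside the model version.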

What Sponsors Should Deliver

FDA recommends preparing a Credibility Assessment Plan that includes:

Transparency: clear description of model inputs, architecture, and assumptions.

Data provenance: where the data came from and how it represents the target population.

Validation metrics: accuracy, sensitivity/specificity, reproducibility.

Bias checks: evidence of fairness across demographics.

Uncertainty management: how error margins are communicated and used in decisions.

Change governance: version control, retraining triggers, and documentation.

Human oversight: when and how decisions can be escalated or overridden.
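The validation-metric and bias-check items above can be combined by reporting sensitivity and specificity separately for each demographic subgroup. The sketch below assumes a binary classifier and a list of `(group, true_label, predicted_label)` records; the function names and the toy data are hypothetical and purely illustrative.

```python
from collections import defaultdict


def sensitivity_specificity(pairs):
    """Return (sensitivity, specificity) for (true, predicted) binary label pairs.

    A metric is None when its denominator is zero (no positives or no negatives).
    """
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    sensitivity = tp / (tp + fn) if (tp + fn) else None
    specificity = tn / (tn + fp) if (tn + fp) else None
    return sensitivity, specificity


def metrics_by_subgroup(records):
    """Group (group, true, predicted) records and compute metrics per group."""
    grouped = defaultdict(list)
    for group, y_true, y_pred in records:
        grouped[group].append((y_true, y_pred))
    return {g: sensitivity_specificity(pairs) for g, pairs in grouped.items()}


# Toy data for illustration only: two subgroups with different error patterns.
records = [
    ("A", 1, 1), ("A", 1, 0), ("A", 0, 0), ("A", 0, 0),
    ("B", 1, 1), ("B", 1, 1), ("B", 0, 1), ("B", 0, 0),
]
for group, (sens, spec) in sorted(metrics_by_subgroup(records).items()):
    print(group, sens, spec)
```

Large gaps between subgroups, such as the ones in this toy data, are the kind of finding a credibility assessment report would need to explain or mitigate.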

Why This Matters

This guidance doesn’t just raise the compliance bar; it encourages earlier, more strategic dialogue with the FDA. Sponsors that map their AI tools to risk categories early in development can avoid painful surprises when submitting NDAs/BLAs.

It also harmonises with EMA/ICH moves toward transparency, helping global trial sponsors build a unified governance approach.

Bottom Line

Think of this not as red tape, but as credibility insurance. If your model drives patient inclusion/exclusion, endpoint adjudication, or supports a regulatory filing, you want regulators and investigators to trust it.

The FDA’s message is clear: the higher the stakes, the higher the bar. Treat AI governance as a living process, not a box to tick. Your future submissions (and your reputation) will thank you.

Reference: FDA (2025). Considerations for the Use of Artificial Intelligence to Support Regulatory Decision-Making for Drug and Biological Products. Draft Guidance, Jan 6, 2025.