AI prescreens cancer patients with ~99% accuracy, cutting manual review time from 20 minutes to 43 seconds

If clinical trials were a game of Where’s Waldo?, eligibility criteria would be Waldo’s glasses: tiny, easy to miss, and capable of derailing your search if you overlook them. For decades, finding the right participants (the people who actually meet the labyrinthine inclusion and exclusion rules) has been a slow, painstaking quest. And while sites and sponsors pour hours of human effort into sifting through charts, an elephant has quietly stepped into the room wearing a speed-boosted jersey: artificial intelligence.

When AI Says “You’re In,” Faster: Inside the New Era of Trial Eligibility Prescreening

In the most recent work to land on my desk, a team of researchers built an AI-assisted workflow that prescreens breast cancer patients for trial eligibility with roughly 99% accuracy, shrinking a review that used to take about 20 minutes to well under a minute. That’s not incremental improvement; that’s a change in the tempo of trial operations. (arXiv)

Let’s unpack how this leap in AI is rewriting the rulebook on who gets into a trial and what that means for patients, sites, and sponsors alike.

1. Why Eligibility Prescreening Has Always Been Hard

Eligibility in clinical trials isn’t a simple checklist. It’s a dense forest of medical nuance: prior therapies, lab values, co-morbidities, symptom thresholds, histories, and more. Traditionally, eligibility assessment has been done by humans combing through electronic health records (EHRs), pathology reports, physician notes, and other semi-structured data — a process that is labour-intensive and error-prone.

Real-world data and retrospective studies suggest that manual prescreening can miss eligible participants, delay enrolment, and stall overall timelines, even at well-resourced sites. (PMC)

Consider this: a single trial’s manual eligibility check can take 15–20 minutes per patient-trial pair. Multiply that by hundreds or thousands of potential participants and suddenly you’re looking at days or weeks of work before enrolment even begins. Enter AI: a system designed to parse data with the speed of an algorithm and the interpretive nuance of a human reviewer.
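To make the “days or weeks” claim concrete, here is a back-of-the-envelope sketch. Only the 15–20 minutes per patient-trial pair comes from the text; the 1,000-pair workload and the 8-hour working day are hypothetical assumptions chosen for illustration.

```python
# Back-of-the-envelope manual prescreening workload.
# The pair count and hours-per-day below are illustrative assumptions,
# not figures from any study cited in this article.
MINUTES_PER_PAIR_LOW = 15
MINUTES_PER_PAIR_HIGH = 20


def manual_review_hours(n_pairs: int, minutes_per_pair: float) -> float:
    """Total reviewer hours needed to manually check n_pairs patient-trial pairs."""
    return n_pairs * minutes_per_pair / 60


def working_days(hours: float, hours_per_day: float = 8) -> float:
    """Convert reviewer hours into full-time working days (8 h/day assumed)."""
    return hours / hours_per_day


if __name__ == "__main__":
    n_pairs = 1_000  # hypothetical: e.g. 500 patients screened against 2 trials
    for minutes in (MINUTES_PER_PAIR_LOW, MINUTES_PER_PAIR_HIGH):
        hours = manual_review_hours(n_pairs, minutes)
        print(f"{minutes} min/pair: {hours:.0f} h ≈ {working_days(hours):.1f} working days")
```

At 20 minutes per pair, this hypothetical workload already exceeds 300 reviewer hours, or roughly eight full-time weeks for a single coordinator.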

2. Case Example: MSK-MATCH and Rapid AI Prescreening

A recent preprint describes one such system, MSK-MATCH: a workflow that automates eligibility prescreening for breast cancer clinical trials by combining a large language model (LLM) with an oncology trial knowledge base. (arXiv)

Researchers applied MSK-MATCH retrospectively to 88,518 clinical documents spanning six breast cancer trials involving 731 patients. The AI system classified eligibility with astonishing performance:

98.6% accuracy

98.4% sensitivity

98.7% specificity

And perhaps most impactful of all:

Average screening time went from ~20 minutes per patient-trial pair to ~43 seconds. (arXiv)

That’s roughly a 28-fold speed-up (about 1,200 seconds down to 43) without sacrificing accuracy.
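The headline figures are easy to sanity-check. The sketch below derives accuracy, sensitivity, and specificity from a confusion matrix of AI calls against a manual reference label, and computes the per-pair speed-up from the reported averages (about 20 minutes vs. 43 seconds). The confusion counts are hypothetical, not the study’s data; precision (positive predictive value) is included only to show that it is a separate metric, not one of the three reported above.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    """Patient-trial pairs tallied against a manual (human) reference label."""
    tp: int  # AI says eligible, reference says eligible
    fp: int  # AI says eligible, reference says ineligible
    tn: int  # AI says ineligible, reference says ineligible
    fn: int  # AI says ineligible, reference says eligible

    @property
    def sensitivity(self) -> float:
        # Share of truly eligible pairs the AI caught (a.k.a. recall).
        return self.tp / (self.tp + self.fn)

    @property
    def specificity(self) -> float:
        # Share of truly ineligible pairs the AI correctly excluded.
        return self.tn / (self.tn + self.fp)

    @property
    def accuracy(self) -> float:
        # Share of all pairs where AI and reference agree.
        return (self.tp + self.tn) / (self.tp + self.fp + self.tn + self.fn)

    @property
    def precision(self) -> float:
        # Positive predictive value: a different metric from those reported above.
        return self.tp / (self.tp + self.fp)


def speedup(manual_seconds: float, ai_seconds: float) -> float:
    """How many times faster the AI-assisted review is per pair."""
    return manual_seconds / ai_seconds


if __name__ == "__main__":
    # Hypothetical counts for illustration only; not the study's data.
    counts = ConfusionCounts(tp=90, fp=5, tn=400, fn=5)
    print(f"accuracy={counts.accuracy:.3f} sensitivity={counts.sensitivity:.3f} "
          f"specificity={counts.specificity:.3f} precision={counts.precision:.3f}")
    # Reported averages: ~20 min manual vs ~43 s AI-assisted per pair.
    print(f"speed-up ≈ {speedup(20 * 60, 43):.1f}x")  # speed-up ≈ 27.9x
```

Plugging in 1,200 s and 43 s gives about 27.9×, which is where the “roughly 28-fold” figure comes from.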

The architecture wasn’t a black box either. The model’s predictions came with explanations that linked each determination back to specific clinical text, so study staff could verify AI decisions rather than treat them as inscrutable, machine-generated guesses. This blend of speed and transparency is critical for clinical adoption.
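One way to picture “explainable output” is a criterion-level verdict that carries the exact text span it relies on. The sketch below is hypothetical: the class names, the three-valued verdict, the treatment of all criteria as inclusion criteria, and the `judge_criterion` function (a keyword search standing in for an LLM call) are illustrative assumptions, not MSK-MATCH’s published design.

```python
from dataclasses import dataclass, field
from enum import Enum


class Verdict(Enum):
    MET = "met"
    NOT_MET = "not_met"
    UNCERTAIN = "uncertain"


@dataclass
class Evidence:
    document_id: str
    start: int  # character offset into the source document
    end: int

    def quote(self, documents: dict[str, str]) -> str:
        """Return the exact source text the verdict relies on, for human verification."""
        return documents[self.document_id][self.start:self.end]


@dataclass
class CriterionResult:
    criterion: str
    verdict: Verdict
    evidence: list[Evidence] = field(default_factory=list)


def judge_criterion(criterion: str, keyword: str, documents: dict[str, str]) -> CriterionResult:
    """Hypothetical stand-in for an LLM judgment: a case-insensitive keyword search
    that records where it matched so a reviewer can check the span."""
    for doc_id, text in documents.items():
        idx = text.lower().find(keyword.lower())
        if idx != -1:
            return CriterionResult(criterion, Verdict.MET, [Evidence(doc_id, idx, idx + len(keyword))])
    # No supporting text found: escalate to a human rather than guess "not met".
    return CriterionResult(criterion, Verdict.UNCERTAIN)


def overall_eligibility(results: list[CriterionResult]) -> Verdict:
    """Combine inclusion-criterion results: any NOT_MET excludes, any UNCERTAIN escalates."""
    verdicts = {r.verdict for r in results}
    if Verdict.NOT_MET in verdicts:
        return Verdict.NOT_MET
    if Verdict.UNCERTAIN in verdicts:
        return Verdict.UNCERTAIN
    return Verdict.MET


if __name__ == "__main__":
    notes = {"path-001": "Invasive ductal carcinoma, HER2-positive (IHC 3+)."}
    result = judge_criterion("HER2-positive disease", "HER2-positive", notes)
    print(result.verdict, [e.quote(notes) for e in result.evidence])
```

The design point is that every verdict can be traced to a quoted span, so a coordinator checks a sentence rather than re-reading the whole chart.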

Real-world parallels are also emerging in randomised prospective settings. In a heart failure trial, researchers deployed a different LLM-based screening tool, RECTIFIER, which assessed clinical notes and structured data to determine eligibility. Compared with manual screening, AI-assisted screening not only sped up the process but also increased the proportion of eligible patients identified and led to more actual enrolments. (The Hospitalist)

Taken together, these examples suggest that AI isn’t just fast; it can also be effective in real clinical settings.

3. What This Means for Clinical Trial Operations

A. Time and Cost Savings

In the MSK-MATCH example, the nearly 20-minute manual review was compressed to under a minute. Multiply that across a single trial’s dataset and the impact is dramatic: less burden on study coordinators, fewer bottlenecks at the site, and earlier enrolment windows.

Similarly, in the heart failure study, faster eligibility determination suggests that trial timelines — often measured in months — can be tightened meaningfully. That doesn’t just translate to operational KPIs; it translates to patients reaching potential therapies sooner.

B. Quality and Consistency

AI systems help here not because humans are bad at this work, but because humans get tired and apply criteria inconsistently. Algorithms, once trained and validated, apply the same criteria the same way across all records. This consistency reduces between-site variance and rater bias, both frequent sources of quality-control queries.
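Consistency is measurable. Cohen’s kappa is a standard statistic for agreement between two raters (two coordinators, or a model and a coordinator) labelling the same patient-trial pairs, corrected for the agreement expected by chance. A minimal sketch, using hypothetical “E”/“I” (eligible/ineligible) labels:

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(rater_a: Sequence[str], rater_b: Sequence[str]) -> float:
    """Cohen's kappa for two raters labelling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items given the same label by both raters.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    # Chance agreement: probability both raters pick the same label independently.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    coordinator = ["E", "E", "I", "I", "E", "I"]  # hypothetical labels
    model = ["E", "I", "I", "I", "E", "I"]
    print(f"kappa = {cohens_kappa(coordinator, model):.3f}")  # kappa = 0.667
```

The raters agree on five of six pairs (83%), but because half of that agreement would be expected by chance, kappa is only 2/3, which is why kappa is a stricter consistency check than raw agreement.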

C. New Operational Roles for Humans

AI doesn’t replace clinical judgment; it augments it. In these workflows, AI handles the bulk of clear-cut cases while uncertain ones are routed back to humans for review. This “human-in-the-loop” model preserves oversight while turbo-charging throughput.
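A confidence-threshold router is one simple way to implement this kind of triage. The sketch assumes the screening model emits a probability of eligibility for each patient-trial pair; the `Screen` class, the queue names, and the 0.1/0.9 cut-offs are hypothetical and would need local validation. Even the “likely eligible” queue is meant for coordinator confirmation, not automatic enrolment.

```python
from dataclasses import dataclass


@dataclass
class Screen:
    pair_id: str
    p_eligible: float  # model's estimated probability that the pair is eligible


def triage(screens: list[Screen], low: float = 0.1, high: float = 0.9) -> dict[str, list[str]]:
    """Route each pair by model confidence. Thresholds are hypothetical placeholders."""
    if not 0 <= low < high <= 1:
        raise ValueError("need 0 <= low < high <= 1")
    queues: dict[str, list[str]] = {
        "likely_eligible": [],    # coordinator confirms before approaching the patient
        "likely_ineligible": [],  # can be spot-checked in periodic audits
        "human_review": [],       # uncertain: full manual review
    }
    for s in screens:
        if s.p_eligible >= high:
            queues["likely_eligible"].append(s.pair_id)
        elif s.p_eligible <= low:
            queues["likely_ineligible"].append(s.pair_id)
        else:
            queues["human_review"].append(s.pair_id)
    return queues


if __name__ == "__main__":
    batch = [Screen("pt1-trialA", 0.97), Screen("pt2-trialA", 0.03), Screen("pt3-trialB", 0.55)]
    print(triage(batch))
```

Widening the gap between `low` and `high` sends more pairs to humans and trades throughput for safety; where to set it is a local governance decision.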

For trial teams, this shift opens opportunities for staff to focus on high-value tasks like patient engagement or complex discrepancy resolution rather than repetitive chart review.

Solution: How Teams Should Prepare

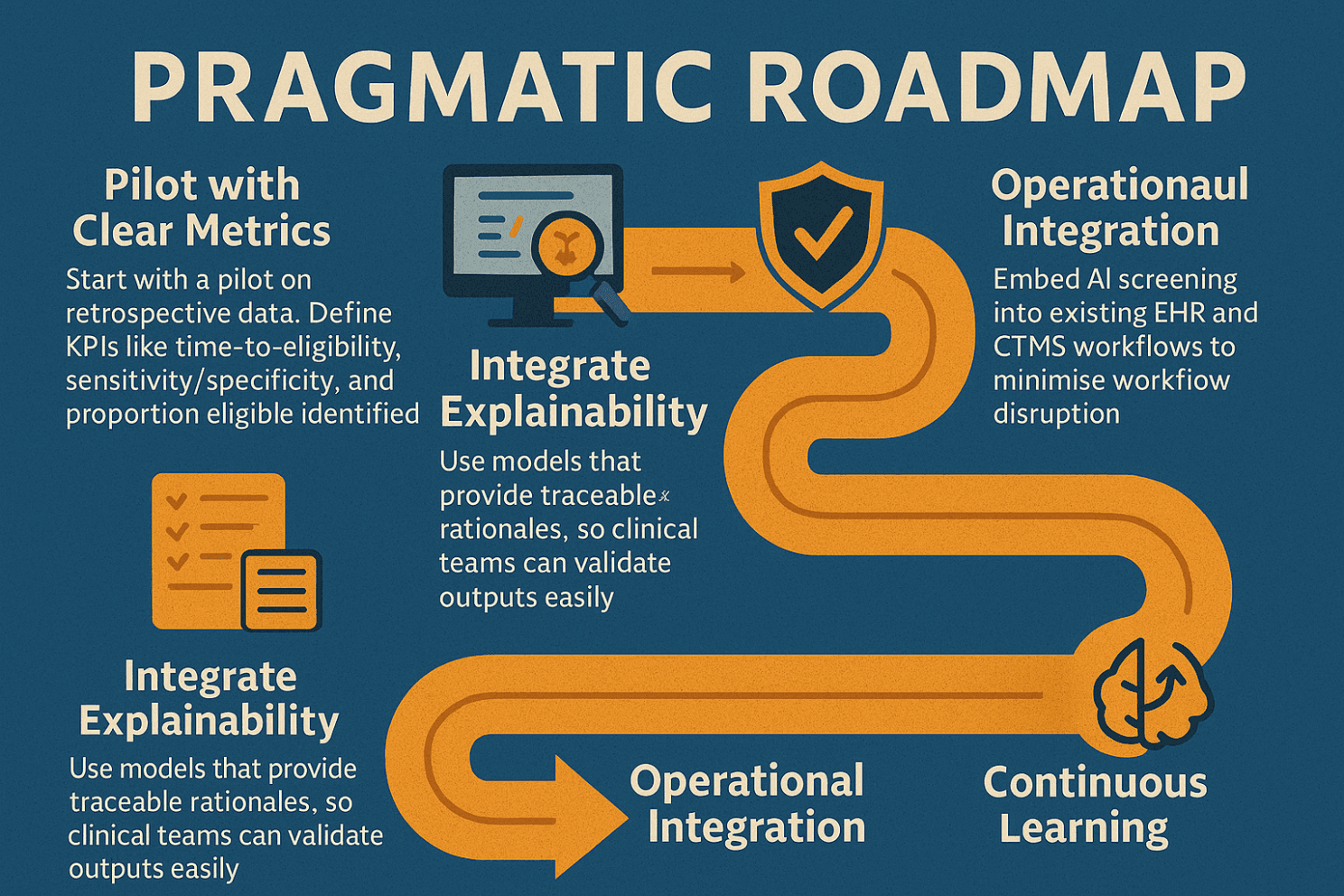

If this trend holds, and early evidence suggests it may, sponsors and sites should start adopting AI eligibility tools thoughtfully. Here’s a pragmatic roadmap:

Pilot with Clear Metrics: Start with a pilot on retrospective data. Define KPIs such as time-to-eligibility, sensitivity/specificity, and the proportion of eligible patients identified.

Integrate Explainability: Use models that provide traceable rationales so clinical teams can validate outputs easily.

Governance & Validation: As regulators increasingly focus on AI transparency and data integrity, ensure any AI solution has robust validation and audit trails.

Operational Integration: Embed AI screening into existing EHR and CTMS workflows to minimise disruption.

Continuous Learning: Use post-deployment performance data to refine models and criteria mappings over time.
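The pilot KPIs and the continuous-learning loop can share one small report. This sketch assumes a human-adjudicated audit sample is collected each month; the `AuditedPair` fields and the 0.95 sensitivity floor are hypothetical choices, not values from the studies cited.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import median


@dataclass
class AuditedPair:
    month: str                  # e.g. "2025-01"
    ai_eligible: bool           # AI prescreening call
    human_eligible: bool        # reference label from manual adjudication
    minutes_to_decision: float  # time from referral to eligibility decision


def monthly_report(pairs: list[AuditedPair], min_sensitivity: float = 0.95) -> dict[str, dict]:
    """Per-month KPIs on an audited sample, with an alert if sensitivity drifts too low."""
    by_month: dict[str, list[AuditedPair]] = defaultdict(list)
    for p in pairs:
        by_month[p.month].append(p)
    report = {}
    for month in sorted(by_month):
        rows = by_month[month]
        eligible = [r for r in rows if r.human_eligible]
        caught = sum(r.ai_eligible for r in eligible)  # eligible pairs the AI identified
        sensitivity = caught / len(eligible) if eligible else None
        report[month] = {
            "n": len(rows),
            "eligible_identified": caught,
            "sensitivity": sensitivity,
            "median_minutes": median(r.minutes_to_decision for r in rows),
            # Flag months where the AI missed too many truly eligible patients.
            "alert": sensitivity is not None and sensitivity < min_sensitivity,
        }
    return report


if __name__ == "__main__":
    sample = [
        AuditedPair("2025-01", True, True, 1.0),
        AuditedPair("2025-01", False, False, 2.0),
        AuditedPair("2025-02", False, True, 3.0),
        AuditedPair("2025-02", True, True, 1.0),
    ]
    for month, kpis in monthly_report(sample).items():
        print(month, kpis)
```

Sensitivity is the metric to watch most closely here, because a missed eligible patient is invisible unless someone audits the “ineligible” pile.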

This isn’t speculative tech. The breast cancer screening workflow and the prospective heart failure trial both show real, measurable benefits. Teams that begin now will be best positioned to reap the operational efficiencies.

Conclusion: A New Tempo for Trial Enrolment

Clinical trials have long been held back by the slow rhythm of eligibility screening. What used to be a manual, labour-intensive chore is now poised to become a swift, intelligent prelude to enrolment, driven by AI workflows that can classify cases with near-human accuracy in a fraction of the time.

This shift doesn’t just speed operations; it enhances consistency, elevates quality, and frees clinical teams to spend their time where human judgment matters most.

The future of eligibility prescreening is not about replacing humans with machines. It’s about letting machines handle the grunt work so humans can do the thinking, strategising, and patient-facing care that truly advances science and health.

Get ahead of the bottleneck. Let AI open the door.

References

Rosenthal J et al., AI-Assisted Workflow Enables Rapid, High-Fidelity Breast Cancer Clinical Trial Eligibility Prescreening. arXiv (Nov 2025).

Unlu O et al., AI-Assisted Screening Tool Speeds Trial Eligibility Determination. JAMA / ClinicalAdvisor (2025).

Parikh RB, Human-AI Teams Improve Accuracy & Timeliness of Oncology Trial Prescreening. JCO (2024).

Lu X et al., Artificial Intelligence for Optimizing Recruitment and Retention in Trials. PMC review (2024).