ChatGPT Is Changing How We Work: What the OpenAI/Harvard Study Means for Clinical Research

A recent working paper by OpenAI, written in partnership with Harvard’s David Deming, sheds new light on how people are actually using ChatGPT, and it holds important takeaways for clinical and research settings. Here’s what the data shows, and why it matters for clinical practice, digital health, and patient care.

What the Study Found

OpenAI’s latest research (in collaboration with Harvard) analysed 1.5 million ChatGPT chats from everyday, non-enterprise users between May 2024 and July 2025.

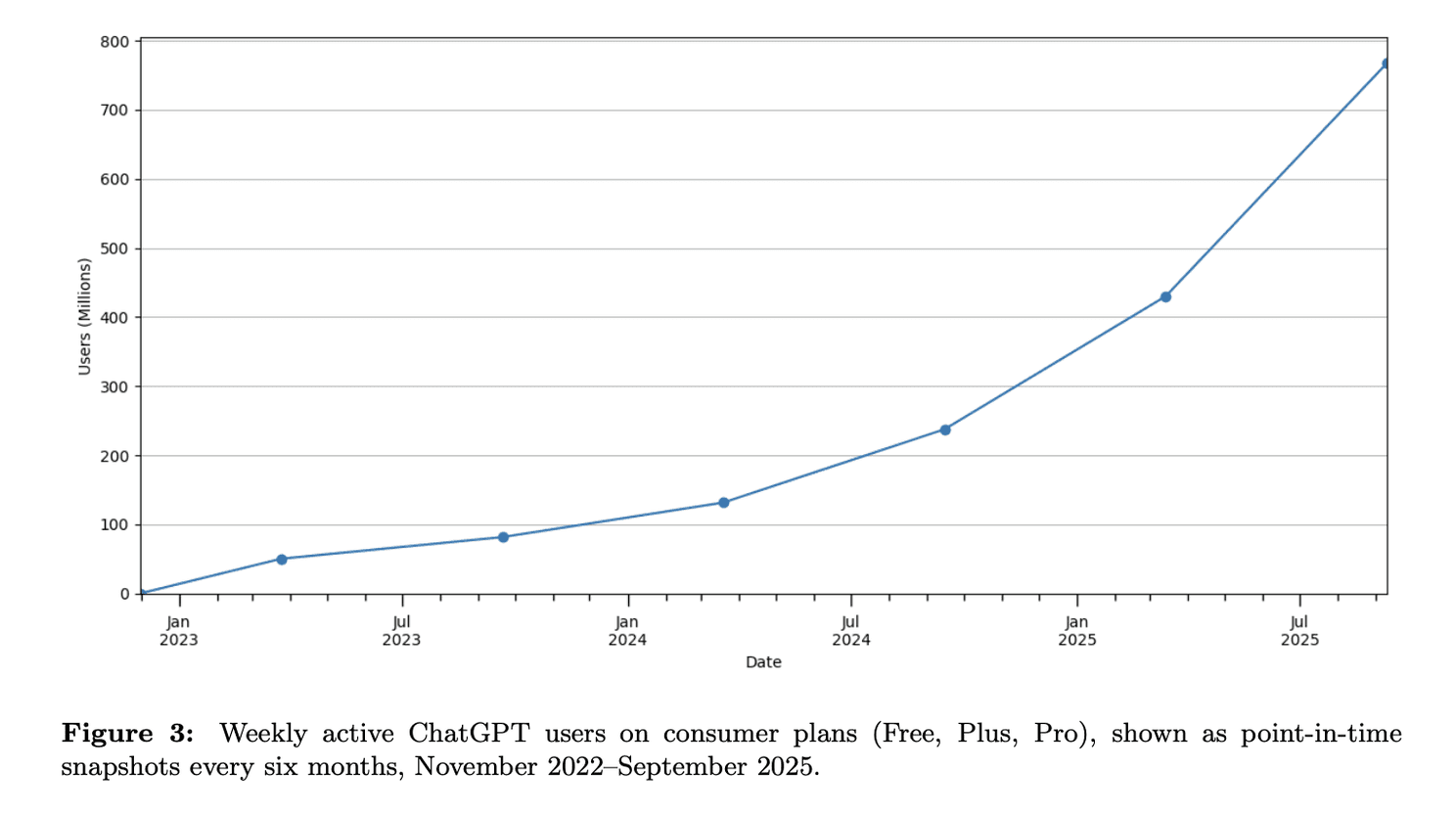

Weekly active ChatGPT users

Top Use Cases

Practical Guidance (28.3%): How-to advice, fitness tips, self-care routines, even dinner recipes.

Writing Support (28.1%): From drafting emails to editing reports, writing remains one of the top drivers of usage.

Seeking Information (21.3%): Users ask for facts, data points, and definitions, with GPT acting like a research assistant.

Technical Help (4–5%): Coding, debugging, and navigating tech problems.

Self-Expression & Chitchat (<5%): A smaller share is used for journaling, greetings, or reflection.

Who’s Using It and How

More Women Than Men: As of mid-2025, over 50% of users (among those with identifiable names) are women, a notable shift from earlier gender imbalances.

Rapid Growth in LMICs: Adoption is growing fastest in low- and middle-income countries (LMICs), where GPT fills key information gaps.

Mostly Non-Work Use: Around 70% of use is personal or non-work-related, though a full 30% is clearly work-focused.

How People Engage With GPT

49% Asking: Seeking advice, explanations, or clarifications.

40% Doing: Performing tasks such as writing, summarising, planning, and coding.

11% Expressing: Reflecting, storytelling, personal expression.

Implications for Clinical / eHealth / eClinical Research

Supporting Patient Education & Self‑Care: The high share of “practical guidance” suggests many users are turning to ChatGPT for health, fitness, beauty, or self‑care questions. This carries both potential and risk: reliable, accessible information could help patients, but the risk of misinformation is real. Clinicians and digital health services should consider how ChatGPT (and similar tools) might augment patient education, perhaps via curated content or protocols that direct patients to validated sources.

Writing & Documentation Support: A significant portion of usage is writing, including summarising, editing, and communication. For clinical researchers and practitioners, this could mean drafting reports, patient communications, grant applications, or manuscripts. There is room to integrate ChatGPT into workflows to save time, improve clarity, and reduce administrative burden, but only with oversight.

Information Seeking vs Task Execution: Because “asking” (information and advice) is the biggest category, clinicians and researchers could be major users for literature searches, clinical guidelines, and summarisation of evidence. Meanwhile, the “doing” category (task‑oriented) offers possibilities for automating parts of research, data preparation, and report generation.

Non‑Work Usage Has Spillover Effects: Since ~70% of usage is personal or non‑work, many clinicians are likely using ChatGPT outside work for their own learning, reflection, and well‑being. Because so much AI exposure happens in personal domains, the trust, digital literacy, and expectations of accuracy formed there will shape how clinicians approach and adopt AI in professional settings.

Geographic / Access Equity: With faster growth in lower‑income countries, there is a chance for AI tools to help reduce information and resource gaps, for instance by providing clinical guidance in places where access to medical libraries or rapid expert consultation is limited. But it also raises issues of language support, correctness, and safe use.

Caveats & Risks

The study is based on consumer, non‑enterprise data. That means health‑sensitive uses, regulated content, and patient data interactions might be underrepresented or absent.

Accuracy & reliability: users frequently ask for advice, but ChatGPT is not a substitute for professional judgment. Erroneous information or hallucinations remain possible.

Privacy & safety: in clinical and patient contexts, confidentiality and correct handling of sensitive data are critical.

Ethical concerns: over-dependence, misinformation, displacement of critical thinking, and digital divide risks.

What This Means for eClinical Edge Readers

As you develop, implement, or evaluate AI tools in clinical trials, electronic health records, telemedicine, or patient support:

Consider embedding features that help users verify or reference authoritative medical sources.

Use ChatGPT‑type tools alongside expert oversight rather than replacing any component of clinical judgment.

Train staff and researchers in digital health literacy, including the strengths and limits of AI.

Monitor use in patient‑facing applications carefully, especially in non‑work settings (self‑care, mental health) where users may not have access to expert commentary or correction.

Key Takeaways

ChatGPT is increasingly being used for everyday, non‑work tasks, but there remains strong usage in writing, seeking information, and practical guidance.

Its role in clinical settings is more indirect so far, mostly in supporting tasks rather than direct patient care, but the foundation is there.

Ensuring safe, accurate, equitable use will require thoughtful design, policies, and oversight.

Sources

OpenAI (September 2025). How People Use ChatGPT. By Aaron Chatterji¹˒², Tom Cunningham¹, David Deming³, Zoë Hitzig¹˒³, Christopher Ong¹˒³, Carl Shan¹, and Kevin Wadman¹. Affiliations: ¹OpenAI, ²Duke University, ³Harvard University.

Full report PDF: https://cdn.openai.com/pdf/a253471f-8260-40c6-a2cc-aa93fe9f142e/economic-research-chatgpt-usage-paper.pdf

Summary webpage: https://openai.com/index/how-people-are-using-chatgpt