How We Shift Our Clinical Trial Organisation’s Mindset to Embrace AI-Driven Change

Picture the clinical trial ecosystem as a convoy of ships at sea: sponsors chart the course, CROs manage the fleet, sites handle the passengers, CDMOs load the cargo, and tech vendors supply the navigation tools. For years, each ship has sailed in tight formation, following orders from the flagship. But now a storm called AI has arrived, and rigid formations can’t adapt quickly enough.

To thrive, we don’t just need better ships or smarter tools. We need a new mindset across the whole fleet.

1. The Core Challenge: Rigid Structures in a Fluid World

Clinical research organisations excel at process discipline, which is essential in a regulated environment. But the “instruction-then-action” posture can slow us down when participant needs and regulatory expectations keep shifting and AI tools change almost weekly.

Sponsors risk delaying portfolio decisions while waiting for layers of approval.

CROs often focus on delivery against contract terms, not rapid experimentation.

Sites can become trapped in SOPs that discourage local problem-solving.

CDMOs and vendors may hesitate to test new models for fear of disrupting validated processes.

The result? We meet compliance standards but fall short on speed, creativity, and participant...the very things AI could accelerate if paired with more autonomy.

2. What the Research Suggests

Autonomy with guardrails outperforms rigidity. Teams given clear objectives and bounded decision rights adapt faster and deliver more (Harvard Business Review).

Psychological safety fuels innovation. From sites to sponsor study teams, people innovate when they can voice risks and test ideas without blame (PLOS).

Agile organisations weather volatility. Talent-centred, flexible operating models outperform traditional hierarchies in turbulent markets (Deloitte).

Culture eats tools for breakfast. Without explicit structures such as fast-track approvals, innovation credits, and cross-functional squads, AI adoption stalls (Forbes).

3. A New Archetype for Clinical Trials

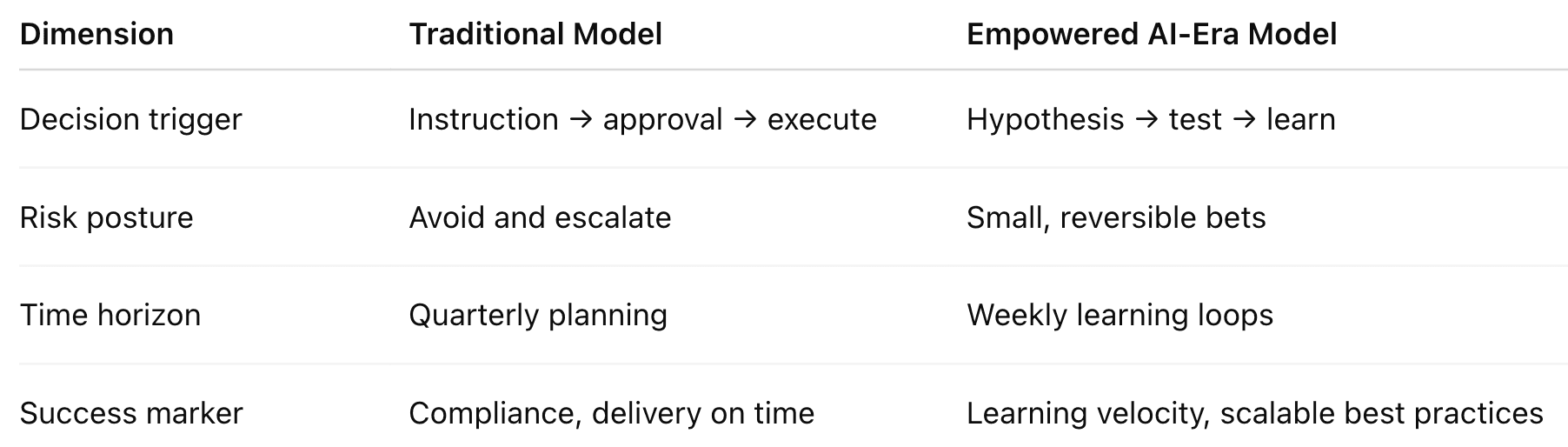

New Archetype of Trials

The point isn’t to abandon structure; compliance and quality are non-negotiable. It’s about rebalancing: more local decision rights, small budgets for experimentation, and faster feedback loops at every level of the trial ecosystem.

NB: take risks where risk is acceptable, not where it isn’t. Compliance and quality are not the place to experiment.

4. What This Looks Like in Practice

Sponsors empowering portfolio teams to pilot AI-assisted trial design and share lessons quickly.

CROs standing up cross-functional squads with autonomy to test recruitment tactics or monitoring models.

Sites using rapid-test lanes for new patient engagement approaches, rather than waiting months for protocol amendments.

CDMOs and vendors trialling digital supply tracking or predictive analytics in controlled, reversible pilots.

These aren’t sweeping transformations; they’re small, safe steps that build organisational muscle for change.

5. Why It Matters for AI

AI won’t deliver value if it’s bolted onto rigid organisations.

Predictive recruitment, automated data cleaning, and generative protocol drafting all require people who are ready to act on signals, test new workflows, and scale what works. The winners in this next era won’t simply be those who adopt AI first; they will be those who build cultures in which experimentation, autonomy, and psychological safety are standard operating procedure.

Conclusion

AI is not only a technological shift. It’s a mindset shift that spans the entire clinical trial ecosystem.

To compete, sponsors, CROs, sites, CDMOs, and vendors alike must:

Empower teams with autonomy within guardrails.

Build psychological safety into daily routines.

Recognise learning velocity as a metric of success.

Create explicit structures for experimentation.

If you have read this far, you may be interested to learn that I have developed an AI Readiness Culture Assessment (12-Step Test).

This 12-item assessment uses Likert-scale (1–5) statements to evaluate how prepared an organisation’s culture and infrastructure are for AI adoption, especially in a clinical research setting.

If you would like to access the test, comment on the post and I'll DM it to you.

Thanks for reading.

References

Harvard Business Review: “How Successful Sales Teams Are Embracing Agentic AI” (2025); “Why Some Sales Teams Are Actually Growing Alongside AI” (2025).

Deloitte: 2025 Global Human Capital Trends (agility and stability); press note on 2024 Human Capital Trends (trust, human performance).

PLOS ONE (2024): empirical study linking team psychological safety to employee innovative performance via communication.

Harvard Business Review: “When Autonomy Helps Team Performance (and When It Doesn’t)” (framework for bounded autonomy).

Forbes: practical mechanisms for building an innovation culture (explicit cross-functional support).

McKinsey & Company: talent as strategy; emphasis on frontline empowerment and productivity (2025).